Is Anthropic's new product powerful enough to put AI agent infrastructure teams out of work?

Original Title: "Anthropic Today Released a New Product That May Cause a Wave of AI Agent Infrastructure Teams to Lose Their Jobs"

Original Author: Bayu, AI Engineer

This product is called Claude Managed Agents. In a nutshell: you tell Anthropic what kind of AI agent you want, and it helps you run it in the cloud, including all infrastructure, with usage-based pricing. Sentry used it to go live with end-to-end automated bug fixing in a few weeks, while Rakuten deployed a specialized agent in a week. Previously, these tasks would require an entire engineering team working for months.

Meanwhile, Anthropic's annual recurring revenue has just surpassed $30 billion, triple that of December last year. Most of the growth comes from enterprise customers. Wall Street has started to get nervous, with the WSJ stating that investors are becoming increasingly cautious about the stock prices of traditional SaaS companies, fearing that products like Anthropic's could make some traditional software services obsolete.

What exactly is this product? How does it differ from the Claude Code you are already using? How was it achieved technically?

What Is It? How Does It Differ from Claude Code?

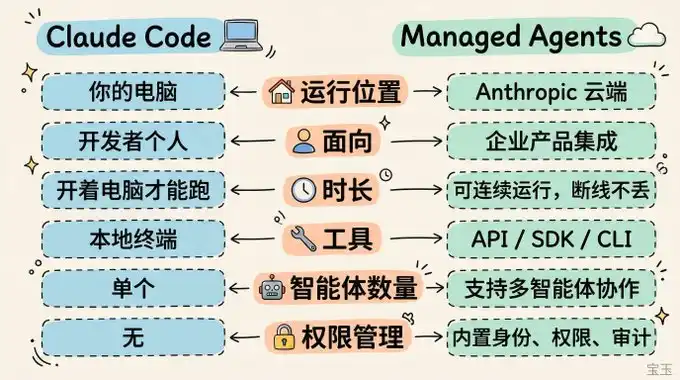

If you have used Claude Code, you know how AI agents work: you give them a task, and they autonomously plan steps, use tools, write code, modify files, and complete the task step by step.

Claude Code runs on your own computer and is a command-line tool for personal developer use. It stops running when you shut down your computer.

Managed Agents run on Anthropic's cloud and are an API service for enterprise use. They can run around the clock and keep their progress even if the connection drops, and you can embed AI agent capabilities directly into your own product.

This is how Notion operates: users assign tasks to Claude agents within Notion, the agents work in the background, complete the tasks, and return the results, all without users having to leave Notion.

Several Typical Use Cases:

· Event-triggered: The system discovers a bug and automatically assigns an agent to fix it and open a pull request, with no human intervention in between.

· Scheduled: Automatically generate a GitHub activity summary or team work brief every morning.

· Fire-and-forget: Assign a task to an agent in Slack; it completes the task and returns the document, PowerPoint, or app.

· Long-running: Run a deep research or code refactoring task for several hours.

What's the Difference Between Cloud-Hosted Agents and Self-Built Agents?

You could self-host, but it's costly and slow.

A production-ready agent requires much more than just "calling an API". It needs a sandbox environment (an isolated, secure space where the AI can run code, modify files, and tinker without affecting real external systems, much like giving the AI a dedicated virtual machine), plus credential management, state recovery, permission control, end-to-end tracing, and more.

Many enterprise customers used to need an entire engineering team dedicated to these tasks. Now, it's plug and play, freeing up engineers to focus on the core of the product.

However, the pain points solved by Managed Agents go beyond just saving labor.

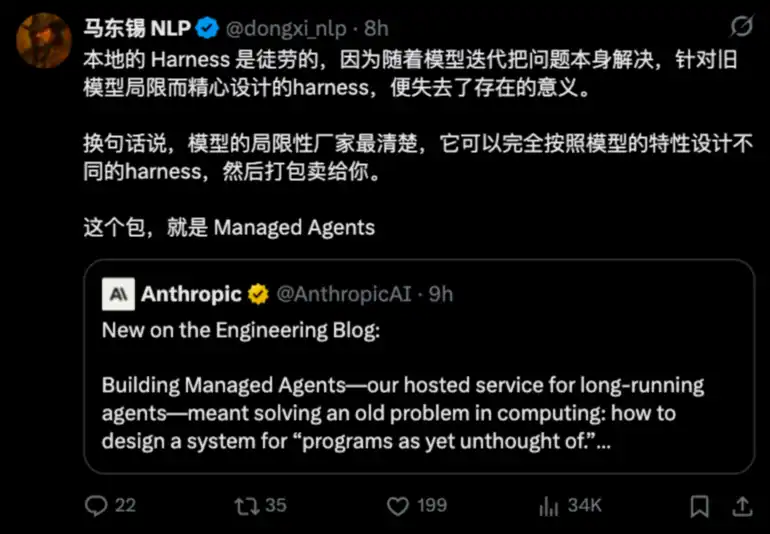

Matt Dongslee (@dongxi_nlp) has a succinct summary:

There's a specific example in the Anthropic Engineering Blog:

When Claude Sonnet 4.5 nears the context window limit, it "panics" and hastily ends the task. They added context reset in the scheduling framework to address this. However, with Claude Opus 4.5, this issue disappeared, and the previous patch actually became a burden.

If you build your own scheduling framework, you have to revisit it with every model upgrade. Hand it over to Anthropic and they keep it tuned for you; after all, they are optimizing the very thing they sell you.

Who's Using It? How?

Notion allows users to offload tasks such as coding, creating PPTs, and organizing spreadsheets directly to Claude within the workspace, running dozens of tasks in parallel, with the whole team collaborating on the same output. Notion Product Manager Eric Liu said users can delegate open-ended complex tasks directly without leaving Notion.

Sentry implemented a fully automated process "from bug discovery to code fix submission." Once their AI debugging tool Seer identifies the root cause, Claude writes the patch and opens a pull request (PR) directly. Engineering Director Indragie Karunaratne said they were able to launch in a few weeks while avoiding the ongoing maintenance cost of self-built infrastructure.

Atlassian integrated it into Jira, enabling developers to directly assign tasks to Claude AI.

Asana created AI Teammates, adding AI collaborators in project management who can take on tasks and deliverables.

General Legal (a legal tech company) has the most interesting approach: their AI can create ad hoc tools to search data based on user queries. Previously, every kind of user query had to be anticipated and a retrieval tool built for it in advance; now the AI generates the tools on demand. The CTO said development time has been cut tenfold.

Rakuten deployed specialized AI agents in engineering, product, sales, marketing, and finance departments, each going live within a week, receiving tasks via Slack and Teams and delivering tangible outputs such as spreadsheets, PPTs, and apps.

Technical Principle: Decoupling the Brain from the Hands

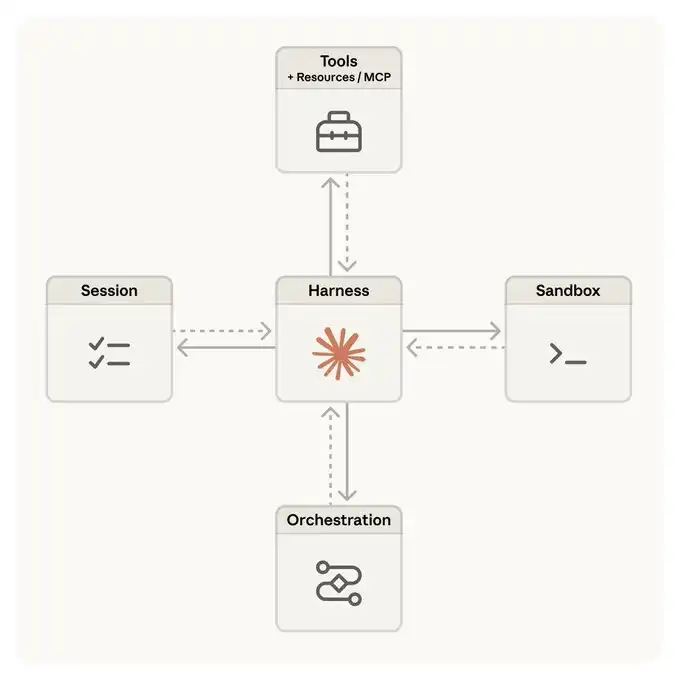

The Anthropic engineering team wrote a tech blog post titled Scaling Managed Agents: Decoupling the brain from the hands, discussing the architectural evolution behind Managed Agents.

Initially, they shoved everything into one container: AI's inference loop, code execution environment, and session log, all together. The benefit was simplicity, but the downside was that all eggs were in one basket—if the container went down, the entire session was lost, and individual parts could not be replaced separately.

Later, they made a key split:

· The "Brain" is Claude and its scheduling framework, responsible for thinking and decision-making.

· The "Hand" is the sandbox and various tools, responsible for executing specific operations.

· The "Memory" is an independent session log, recording everything that happens.

The three are independent, and if one goes down, it does not affect the other two.
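The split can be pictured with a small sketch. Everything here is an illustrative assumption rather than Anthropic's actual implementation: the class names `SessionLog`, `Sandbox`, and `Brain` are made up, and a fixed `plan` list stands in for the model's decisions. The point it shows is that the brain keeps no state of its own, so a fresh brain with fresh hands can pick up from the log after a crash.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class SessionLog:
    """The 'memory': an append-only record kept outside brain and hands."""
    events: list = field(default_factory=list)

    def append(self, kind: str, payload: str) -> None:
        self.events.append({"kind": kind, "payload": payload})


class Sandbox:
    """The 'hands': runs a named tool and returns text (hypothetical tools)."""

    def execute(self, name: str, tool_input: str) -> str:
        if name == "echo":
            return tool_input
        raise ValueError(f"unknown tool: {name}")


class Brain:
    """The 'brain': works out where it is from the log alone."""

    def __init__(self, plan: list) -> None:
        self.plan = plan  # stand-in for decisions the model would make

    def run(self, log: SessionLog, hands: Sandbox,
            max_steps: Optional[int] = None) -> None:
        # Count finished steps in the log so a replacement brain can resume.
        done = sum(1 for e in log.events if e["kind"] == "tool_result")
        steps = self.plan[done:]
        if max_steps is not None:
            steps = steps[:max_steps]
        for name, tool_input in steps:
            log.append("tool_call", f"{name}:{tool_input}")
            log.append("tool_result", hands.execute(name, tool_input))


plan = [("echo", "a"), ("echo", "b"), ("echo", "c")]
log = SessionLog()
Brain(plan).run(log, Sandbox(), max_steps=1)  # first brain stops after one step
Brain(plan).run(log, Sandbox())               # new brain and new hands resume
```

Because the log is the only thing that survives, losing either of the other two parts costs a restart, not the session.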

This split brought several practical benefits:

Speed

Not every task needs to start the full sandbox environment. Now, the sandbox is only launched on-demand when AI truly needs to run code. The median first response latency decreased by about 60%, and in extreme cases, it dropped by over 90%.
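A rough sketch of the on-demand idea, under stated assumptions: `LazySandbox`, `provision_fake_sandbox`, and `handle` are hypothetical names, and the provisioning step here is a stub where a real system would boot an isolated environment. It only illustrates why text-only steps no longer pay the sandbox startup cost.

```python
from typing import Callable, Optional

ToolRunner = Callable[[str, str], str]


class LazySandbox:
    """Defers the expensive provisioning step until the first tool call."""

    def __init__(self, provision: Callable[[], ToolRunner]) -> None:
        self._provision = provision
        self._runner: Optional[ToolRunner] = None
        self.provision_count = 0

    def execute(self, name: str, tool_input: str) -> str:
        if self._runner is None:  # first real use: pay the startup cost now
            self._runner = self._provision()
            self.provision_count += 1
        return self._runner(name, tool_input)


def provision_fake_sandbox() -> ToolRunner:
    """Hypothetical provisioning; a real one would start a container or VM."""
    return lambda name, tool_input: f"{name} -> {tool_input}"


def handle(step: dict, sandbox: LazySandbox) -> str:
    """Answer text-only steps directly; touch the sandbox only for tool steps."""
    if step["type"] == "text":
        return step["content"]
    return sandbox.execute(step["tool"], step["input"])


sandbox = LazySandbox(provision_fake_sandbox)
first = handle({"type": "text", "content": "hello"}, sandbox)
count_after_text = sandbox.provision_count
second = handle({"type": "tool", "tool": "run", "input": "ls"}, sandbox)
third = handle({"type": "tool", "tool": "run", "input": "pwd"}, sandbox)
```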

Security

Code generated by AI runs in the sandbox, while the credentials for accessing external systems are stored in a secure vault outside the sandbox, with the two physically isolated from each other. For example, to access a Git repository, the system clones the code during initialization, and the AI uses git push/pull as normal, but the token itself is never visible to the AI. Services like Slack and Jira are accessed via the MCP protocol: requests go through a proxy layer, the proxy retrieves the credentials from the vault and calls the service, and the AI never handles the credentials at any point.
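The proxy pattern described here can be sketched in a few lines. This is a toy model, not the real MCP proxy or vault: `Vault`, `ToolProxy`, `fake_slack`, and the `demo-token` value are all hypothetical. What it shows is the shape of the boundary: the agent side names a service and a request, and only the proxy ever reads the credential.

```python
from typing import Callable, Dict

Backend = Callable[[str, str], str]  # (request, token) -> response


class Vault:
    """Holds credentials outside the sandbox."""

    def __init__(self, secrets: Dict[str, str]) -> None:
        self._secrets = dict(secrets)

    def token_for(self, service: str) -> str:
        return self._secrets[service]


class ToolProxy:
    """Sits between the agent and external services and attaches credentials."""

    def __init__(self, vault: Vault, backends: Dict[str, Backend]) -> None:
        self._vault = vault
        self._backends = backends

    def call(self, service: str, request: str) -> str:
        token = self._vault.token_for(service)  # only the proxy sees this
        return self._backends[service](request, token)


def fake_slack(request: str, token: str) -> str:
    """Hypothetical backend: checks the token, replies without echoing it."""
    if token != "demo-token":
        raise PermissionError("bad token")
    return f"slack accepted: {request}"


def agent_step(proxy: ToolProxy) -> str:
    """Agent-side code: it supplies a service name and a request, nothing more."""
    return proxy.call("slack", "post 'build is green'")


proxy = ToolProxy(Vault({"slack": "demo-token"}), {"slack": fake_slack})
response = agent_step(proxy)
```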

Flexibility

The Brain doesn't care what the Hand is. There's an interesting phrase in the engineering blog: the scheduling framework doesn't know if the sandbox is a container, a mobile phone, or a Pokémon emulator. It just needs to adhere to the "input a name, get a string out" interface.

This also means that multiple Brains can share the Hand, and one Brain can hand over the Hand to another Brain, laying the foundation for multi-agent collaboration.
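One way to read the "input a name, get a string out" interface is as a single method, written here as `execute(name, tool_input) -> str`. That signature is an assumed rendering, and `ContainerHands`, `EmulatorHands`, and `Brain` are hypothetical names. The sketch shows two things from the text: any object with that method can act as the hands, and one brain can pass its hands to another.

```python
from typing import Protocol


class Hands(Protocol):
    """Anything exposing execute(name, tool_input) -> str can be the hands."""

    def execute(self, name: str, tool_input: str) -> str: ...


class ContainerHands:
    """Hypothetical stand-in for a container-backed sandbox."""

    def execute(self, name: str, tool_input: str) -> str:
        return f"container ran {name}({tool_input})"


class EmulatorHands:
    """Hypothetical stand-in for something exotic, such as a game emulator."""

    def execute(self, name: str, tool_input: str) -> str:
        return f"emulator ran {name}({tool_input})"


class Brain:
    """Holds a label and a reference to whatever hands it was given."""

    def __init__(self, label: str, hands: Hands) -> None:
        self.label = label
        self.hands = hands

    def act(self, name: str, tool_input: str) -> str:
        return f"[{self.label}] " + self.hands.execute(name, tool_input)

    def hand_over(self, other: "Brain") -> None:
        """Pass the same hands object to another brain."""
        other.hands = self.hands


shared = ContainerHands()
planner = Brain("planner", shared)
reviewer = Brain("reviewer", EmulatorHands())
before = reviewer.act("press", "A")
planner.hand_over(reviewer)
after = reviewer.act("ls", ".")
```

The brain code never branches on what kind of hands it holds, which is the property the blog's container, phone, and emulator line is pointing at.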

Limitations

Managed Agents are not all-powerful. There are several points to note:

Some features are still in the research preview stage. Abilities such as multi-agent collaboration, advanced memory tools, and self-assessment iteration (allowing the agent to judge its own task completion quality and iteratively improve) are not fully open yet and require application for access.

Platform Lock-in. Opting for Managed Agents means your agent infrastructure is tied to the Anthropic ecosystem. If you plan to switch models or platforms in the future, migration costs should not be overlooked.

Context management remains a challenge. While session logs are stored independently, deciding which information to retain or discard during long tasks still involves irreversible decisions. This is an ongoing challenge, and their current approach separates context storage from context management: storage ensures preservation, while management policies adjust with model evolution.
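A minimal sketch of that separation, assuming a toy design: `SessionStore`, `keep_last`, `keep_first_and_last`, and `build_context` are hypothetical names, and real policies would be far more involved. Storage never discards anything, while the policy that decides what the model sees is a plain function that can be swapped when a new model behaves differently.

```python
from typing import Callable, List

Event = str
Policy = Callable[[List[Event]], List[Event]]


class SessionStore:
    """Storage: append-only, never drops anything."""

    def __init__(self) -> None:
        self._events: List[Event] = []

    def append(self, event: Event) -> None:
        self._events.append(event)

    def all(self) -> List[Event]:
        return list(self._events)  # copy, so a policy cannot mutate the store


def keep_last(n: int) -> Policy:
    """Management policy: show the model only the n most recent events."""
    return lambda events: events[max(0, len(events) - n):]


def keep_first_and_last(n: int) -> Policy:
    """Management policy: keep the original task plus the n latest events."""
    def policy(events: List[Event]) -> List[Event]:
        if len(events) <= n + 1:
            return list(events)
        return events[:1] + events[len(events) - n:]
    return policy


def build_context(store: SessionStore, policy: Policy) -> List[Event]:
    """Derive a context view; the stored log itself is left untouched."""
    return policy(store.all())


store = SessionStore()
for event in ["task", "step1", "step2", "step3", "step4"]:
    store.append(event)

recent_only = build_context(store, keep_last(2))
task_plus_recent = build_context(store, keep_first_and_last(2))
```

Dropping an event from a view is reversible here because the full log is still in the store; the irreversible part in a real system is what the model already did based on a trimmed view.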

Cost Predictability. $0.08 per session hour may sound reasonable, but for complex tasks requiring the agent to run for several hours, considering token consumption and runtime costs, the overall cost may not be insignificant. Enterprises need to assess their budgets accordingly.

Managed Agents also make it clear that most enterprises still have a long way to go before they can "fully rely on AI agents for work."

While the infrastructure barrier has been lowered, Managed Agents cannot assist with defining good tasks, designing workflows, or establishing trust to allow AI to access core business data.

The "AWS Moment" of AI Agent Infrastructure

Managed Agents seem to be following the path AWS took in its early days: first providing computing power, then encapsulating the runtime environment.

Ten years ago, enterprises debated whether to "move to the cloud"; now, the debate is whether to "self-host Agent infrastructure or go with managed services." Historical experience tells us that most enterprises eventually choose managed services because infrastructure is never a core competency. OpenAI has also launched its own Agent platform, Frontier, and the competition in this space is just beginning.

From a technological perspective, the "separation of brain and hand" architectural approach is worth noting. It allows each part of the system to evolve independently: upgrade the model, change the brain; need a new tool, add a hand; alter the storage solution, replace the memory layer.

A good analogy from the engineering blog: an operating system's read() call doesn't care whether it's dealing with a 1970s disk or a modern SSD. The abstraction layer is stable, so the underlying implementation can be swapped out easily.

From a usage perspective, if you are an enterprise developer looking to embed AI agent capability in your product, Managed Agents might save you several months of infrastructure work.

SDKs are available in six languages (Python, TypeScript, Java, Go, Ruby, PHP). If you are already using Claude Code, update to the latest version and type /claude-api managed-agents-onboarding to get started.

If you are a casual AI enthusiast, the most immediate impact you might feel is: in the SaaS products you use, more and more AI agents will be working in the background to assist you, with these agents likely running on Managed Agents.

Pricing Reference: Token costs are based on the Anthropic API standard pricing, with a runtime cost of $0.08 per session hour (idle time is not billed) and $10 per thousand web searches.
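To get a feel for these numbers, here is a back-of-the-envelope estimator. The two rates come from the pricing above; the function name `estimate_cost`, the example workload (5 hours, 200 searches), and the `token_cost_usd` figure are assumptions for illustration, since token spend depends on the model and usage and is not given here.

```python
RUNTIME_USD_PER_SESSION_HOUR = 0.08   # from the pricing reference above
SEARCH_USD_PER_THOUSAND = 10.0        # from the pricing reference above


def estimate_cost(session_hours: float, web_searches: int,
                  token_cost_usd: float = 0.0) -> float:
    """Sum runtime, web search, and (caller-supplied) token costs in USD."""
    runtime = session_hours * RUNTIME_USD_PER_SESSION_HOUR
    searches = web_searches / 1000 * SEARCH_USD_PER_THOUSAND
    return round(runtime + searches + token_cost_usd, 2)


# Hypothetical workload: a 5-hour active session that runs 200 web searches.
platform_only = estimate_cost(session_hours=5, web_searches=200)

# Same workload with an assumed 3.00 USD of token usage added on top.
with_tokens = estimate_cost(session_hours=5, web_searches=200,
                            token_cost_usd=3.00)
```

In this made-up example the runtime fee is 0.40 USD and the searches are 2.00 USD, so the hourly charge is the smallest line item; tokens and searches are what to watch on long tasks.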

Do you think that the infrastructure for AI agents will eventually be dominated by a few major players, similar to how cloud computing is today?
